Activated Data Management: Data Fabric and Data Mesh, Key Differences, How They Help, and Proven Benefits from Their Coexistence

Aggregated view of Data Fabric and Data Mesh from various sources online

In a constantly changing data landscape that demands new views, aggregations, and projections of the data, discovering the right data and getting hold of it on time is one of the major issues I have observed in organizations.

From data warehouses to data lakes, organizations store data from all over the place, typically either in a central location, creating a monolith, or in a location specific to each line of business, creating data silos. The aim is to make better decisions, improve performance, analyze new trends, etc.

The amount of data created in 2020 was 64.2 zettabytes, around 64 billion one-terabyte hard drives’ worth of data.

Storing data is great but trusting and getting hold of quality data on time is absolutely critical to business success.

In enterprises where multi-level approvals and processes are in place due to a hierarchical and traditional philosophy, data silos or centralization have thwarted business agility and lengthened time to market, making it difficult to react in time to dynamic market needs.

Requirements like customer 360 reports on aggregated data have become time-consuming and error-prone because the team managing the data has neither the bandwidth nor the relevant understanding of the data for the specific ask.

The key to solving this challenge is data de-centralisation with an ownership model and having a common framework to tie various datasets together.

With that in mind, new flexible concepts like data mesh and data fabric have emerged, to scale the delivery of the right data to satisfy diverse use cases and most importantly, provide the agility to respond quickly to changing business needs while working with enterprise data in a hybrid multi-cloud ecosystem.

Before we dig deeper into the Data Fabric and Data Mesh approaches, let’s go through some of the problems organizations are facing right now.

Problems your organization is facing right now.

- Siloed data. The bulk of raw data is accessible only to one line of business and isolated from the rest of the organization, which results in a severe lack of transparency, efficiency, and trust within that organization. It makes it very hard for C-level executives to consolidate all of the business’s data into a 360-degree view.

- The complexity of data integration prevents organizations from maximizing the value of and fully leveraging their data. Traditional data integration is no longer sufficient to meet business requirements such as universal transformations, real-time connectivity, etc. Integrating, processing, and transforming organizational data with data from multiple sources is a challenge for many organizations.

- Data identification and authenticity. Data is either spread across various systems or stored in a monolithic data lake maintained by a team with no knowledge of its relevance, which has caused data authenticity issues. It has also created ambiguity about what the data means. For example, the analysis staff has multiple self-contained systems that use different names for IDs. This causes persistent friction in your organization: constant cross-checking and filtering are required every time you need data for a specific purpose, for example customer revenue reports.

- Surrogate Data. The accuracy of the statistics in a report depends primarily on the data selected and its quality. When the selected data are not found across these self-contained systems, there is a tendency to substitute surrogate data. This is what affects the report’s accuracy.

- Multilevel Approvals. In an organization with a hierarchical and traditional philosophy, needing multi-level approval just to get data has a direct impact on the business’s time to market.

- Combining data from multiple lines of business to create a 360-degree view. Integrating data that is spread across multiple silos, or stored in a central data lake, using various technologies is challenging. Beyond integration, data integrity is also in question because the data is usually maintained by teams who don’t understand its relevance to the specific line of business.

- Offline data. Data has proliferated across various sources, and in bigger organizations it is even stored in EUCs (end-user computing applications). As a result, these organizations have always required specialized analysts to spend an inordinate amount of time manually cleansing, organizing, and aggregating data.

- Data Find-ability / Discovery. Organizations struggle with a breakdown in communication between data experts, business leaders, and the teams who rely on data analyses to do their jobs. Without the ability to discover and analyze patterns and trends within data sets, businesses cannot give themselves a competitive edge.
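To make the "different names for IDs" problem concrete, here is a minimal sketch of the cross-checking that teams end up doing by hand. The system names (`crm`, `billing`, `support`), the field names, and the sample records are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical mapping from each system's ID field name to one canonical name.
ID_ALIASES = {
    "crm": "cust_id",
    "billing": "customerNumber",
    "support": "client_ref",
}

def normalize(system: str, record: dict) -> dict:
    """Return a copy of the record with the system-specific ID renamed to 'customer_id'."""
    alias = ID_ALIASES[system]
    out = {k: v for k, v in record.items() if k != alias}
    # IDs arrive as ints, strings, or padded strings; coerce to a trimmed string.
    out["customer_id"] = str(record[alias]).strip()
    return out

def customer_360(sources: dict) -> dict:
    """Merge records from all systems into one view per customer."""
    view = defaultdict(dict)
    for system, records in sources.items():
        for record in records:
            row = normalize(system, record)
            cid = row.pop("customer_id")
            view[cid][system] = row
    return dict(view)

# Illustrative sample data, not taken from any real system.
sources = {
    "crm": [{"cust_id": "42", "name": "Acme Ltd"}],
    "billing": [{"customerNumber": 42, "revenue": 1200.0}],
    "support": [{"client_ref": " 42 ", "open_tickets": 3}],
}
print(customer_360(sources))
```

Even in this toy form, the alias table has to be maintained by someone who understands every system, which is exactly the knowledge that a central team often lacks.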

Let’s understand what data fabric and data mesh approaches suggest.

What is a data fabric?

A data fabric is a queryable data layer making data available via purpose-built APIs (optionally also via direct connection to the data stores for those tools that don’t support APIs).

Gartner defines a data fabric as “a design concept that serves as an integrated layer of data and connecting processes. A data fabric utilizes continuous analytics over existing, discoverable and inferenced metadata to support the design, deployment and utilization of integrated and reusable datasets across all environments, including hybrid and multicloud platforms.”

Data fabric is a single environment consisting of a unified architecture, with services and technologies running on it, that helps a company manage its data. It enables accessing, ingesting, integrating, and sharing data in an environment where the data can be batched or streamed and be in the cloud or on-prem.

It is the compute layer through which the data fabric connects otherwise disconnected silos and systems.

At the core of data fabric is the intelligent analysis of metadata supporting a smarter system of integration among the varied sets of data tools, enabling trusted and reusable data to be leveraged by the widest possible group of consumers — humans and machines alike.

The objective of a data fabric is to address the main pain points in some of the big data projects, not just in a cohesive manner but also by operating in a self-service model.
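The idea of a queryable layer over disconnected stores can be sketched in a few lines. This is a toy illustration under stated assumptions, not how any fabric product works: the `Source` and `DataFabric` classes, the tags, and the in-memory "stores" are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable

@dataclass
class Source:
    name: str                            # logical dataset name
    location: str                        # e.g. "on-prem" or "cloud" (metadata only)
    fetch: Callable[[], Iterable[dict]]  # how to read rows from the underlying store
    tags: set = field(default_factory=set)

class DataFabric:
    """Toy access layer: one entry point over several disconnected stores."""

    def __init__(self):
        self._sources = {}

    def register(self, source: Source) -> None:
        self._sources[source.name] = source

    def discover(self, tag: str) -> list:
        """Find dataset names by metadata tag, in alphabetical order."""
        return sorted(s.name for s in self._sources.values() if tag in s.tags)

    def query(self, name: str, predicate=lambda row: True) -> list:
        """Read rows from a dataset without the caller knowing where it lives."""
        return [row for row in self._sources[name].fetch() if predicate(row)]

fabric = DataFabric()
# Two hypothetical silos, stubbed with in-memory rows.
fabric.register(Source("orders", "on-prem",
                       lambda: [{"id": 1, "total": 50}, {"id": 2, "total": 500}],
                       {"sales"}))
fabric.register(Source("leads", "cloud",
                       lambda: [{"id": 7, "score": 0.9}],
                       {"sales", "marketing"}))

print(fabric.discover("sales"))
print(fabric.query("orders", lambda r: r["total"] > 100))
```

The consumer asks for `orders` by name and never sees whether it is on-prem or in the cloud; the metadata tags are what make the dataset discoverable in the first place.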

What is a data mesh?

According to Forrester, “A data mesh is a decentralized sociotechnical approach to share, access and manage analytical data in complex and large-scale environments — within or across organizations.”

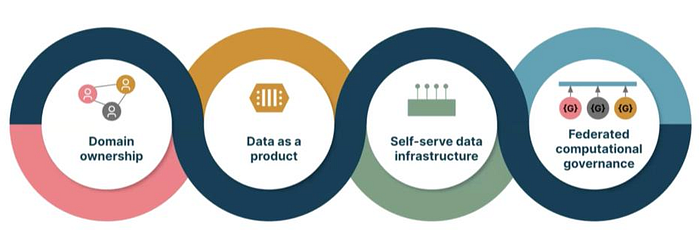

Data mesh is data infrastructure as a platform, which provides storage, pipeline, data catalog, and access control to the domains. It is a decentralized approach to data architecture. It breaks data into distinct ‘data products’ that are owned and managed by the domain teams closest to them.

Data mesh promotes a culture of connecting people, creating empathy, and building a structure of federated responsibilities. This way, generating business value from data can be scaled sustainably.

It takes a more people- and process-centric view, forgoing technology edicts and arguing for “decentralized data ownership” and the need to treat “data as a product”. This approach overcomes the bottlenecks and disconnects that are typical of data lake and data warehouse environments, disconnects that arise when data engineers play middlemen between data producers and consumers.

Its key idea is to apply domain-driven design and product thinking to the challenges in the data and analytics space.
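One way to picture "data as a product" is as a small object that carries its owning domain and a published contract alongside the data. The sketch below is an assumption-laden illustration: the `DataProduct` class, its fields, and the sample `customer_revenue` product are hypothetical and not part of any data mesh specification.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class DataProduct:
    name: str
    domain: str                # owning business domain
    owner: str                 # accountable team or person
    schema: dict               # column name -> expected Python type (the published contract)
    read: Callable[[], list]   # how the owning domain serves the rows

    def validate(self) -> list:
        """Return the rows only if every row honours the published schema."""
        rows = self.read()
        for row in rows:
            for column, expected in self.schema.items():
                if not isinstance(row.get(column), expected):
                    raise ValueError(f"{self.name}: column {column!r} breaks the contract")
        return rows

# A hypothetical product owned by the finance domain.
revenue = DataProduct(
    name="customer_revenue",
    domain="finance",
    owner="finance-data-team",
    schema={"customer_id": str, "revenue": float},
    read=lambda: [{"customer_id": "42", "revenue": 1200.0}],
)
print(revenue.validate())
```

The point of the design is that the team closest to the data is named on the product and is responsible for keeping the contract true, rather than a central team that has to guess at its meaning.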

Key Differences between Data Fabric and Data Mesh

According to Noel Yuhanna, an analyst from Forrester, the major difference between the data mesh and the data fabric approach is the way the APIs are processed.

- In a data mesh, developers write code for the APIs that applications interface with. Data fabric is essentially the opposite: it is a no-code or low-code method, where the API integration is executed inside the fabric rather than being leveraged directly.

- Data fabric is more technology-centric while data mesh is more dependent on organizational change.

- Data fabric products are mainly developed on production usage patterns, whereas data mesh products are designed by business domains.

- In a data fabric, the discovery of metadata is continuous and its analysis is an ongoing process, while in a data mesh the metadata operates in a localized business domain and is static in nature.

- From a deployment standpoint, data fabric harnesses the infrastructure that is already available, whereas data mesh extends the current infrastructure with new deployments in business domains.

- Data mesh advocates product thinking for data as a core design principle. As a result, data is maintained and provisioned like any other product in the organization with a data mesh. Where data fabric leverages automation in discovering, connecting, recognizing, suggesting, and delivering data assets to data consumers based on a rich enterprise metadata foundation (e.g., a knowledge graph), data mesh relies on data product/domain owners to drive the requirements upfront for data products.

- Data mesh is more about people and process than architecture, while a data fabric is an architectural approach that tackles the complexity of data and metadata intelligently so that the two work well together.

Benefits to the organization from the coexistence of data mesh and data fabric concepts

- Provides data owners with data product creation capabilities such as cataloging data assets, transforming assets into products, and following federated governance policies.

- Enables data owners and data consumers to use the data products in various ways, such as publishing data products to the catalog, searching and finding data products, and querying or visualizing data products by leveraging data virtualization or using APIs.

- Uses insights from data fabric metadata to automate tasks by learning from patterns, either as part of the data product creation process or as part of the process of monitoring data products.

- When it comes to data management, a data fabric provides the capabilities needed to implement and take full advantage of a data mesh by automating many of the tasks required to create data products and manage the lifecycle of data products.

- A data fabric gives you the flexibility to start with a use case, allowing you to get quick time to value regardless of where your data is.

- By using the flexibility of a data fabric foundation, you can implement a data mesh and continue to take advantage of a use case-centric data architecture regardless of whether your data resides on-premises or in the cloud.

- Experts from both worlds agree on the need for a self-serve data platform.

- Both data fabric and mesh enable people to use and reuse data by making the most valuable assets the most visible for wider use.

- Both promote the use of metadata. In data mesh, we have a domain-driven design, and in data fabric, we have activated metadata.
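As a rough illustration of how the two ideas can work together, the sketch below has domain teams publish data products to a catalog (the mesh side) while usage metadata collected by the platform decides which products surface first in search (the fabric side). The `Catalog` class, its methods, and the sample products are hypothetical.

```python
from collections import Counter

class Catalog:
    """Toy catalog: domain teams publish products, usage metadata drives visibility."""

    def __init__(self):
        self._products = {}      # name -> descriptive metadata
        self._usage = Counter()  # name -> number of reads (the "activated" metadata)

    def publish(self, name: str, domain: str, description: str) -> None:
        self._products[name] = {"domain": domain, "description": description}

    def record_read(self, name: str) -> None:
        self._usage[name] += 1

    def search(self, keyword: str) -> list:
        """Return matching product names, most used first, ties broken by name."""
        hits = [n for n, meta in self._products.items()
                if keyword.lower() in meta["description"].lower()]
        return sorted(hits, key=lambda n: (-self._usage[n], n))

catalog = Catalog()
catalog.publish("customer_revenue", "finance", "Monthly revenue per customer")
catalog.publish("customer_tickets", "support", "Open support tickets per customer")

# Simulated consumption: the tickets product is read more often.
for _ in range(3):
    catalog.record_read("customer_tickets")
catalog.record_read("customer_revenue")

print(catalog.search("customer"))
```

Ranking by observed usage is only one possible policy; it is used here because it mirrors the point above about making the most valuable assets the most visible.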

If data fabric is about getting data to the right place, data mesh gets that data to the right place with the right context.